Introduction: Is ChatGPT Multimodal

Can ChatGPT Process Images and Text Together? Multimodal AI Answer

Is ChatGPT Multimodal: Over the past few years, the transition off of text has become one of the largest strides in AI and, in particular, in the context of language models. The users have now demanded models to be able to see, hear and even create pictures, sounds and even videos.

This is the direction that ChatGPT created by OpenAI developed. By 2025, most of its versions and tools are multimodal. However, what that is, what versions are really multimodal, and how good are they? This guide provides the answers to these questions in detail that helps you know whether ChatGPT is multimodal at the moment, how it impacts the users, and what to expect in the future.

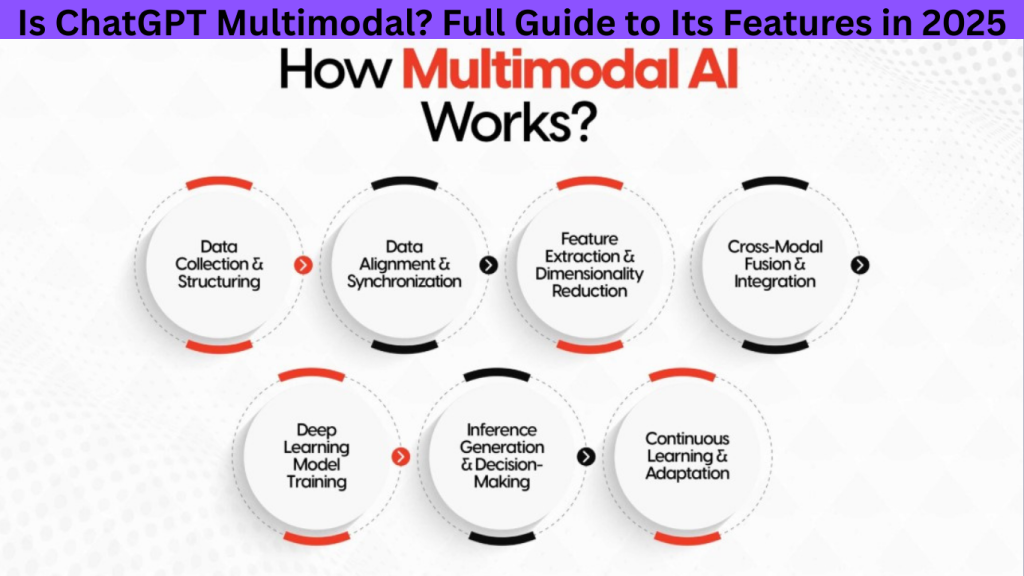

What “Multimodal” Means in AI Context: Is ChatGPT Multimodal

To set the foundation, “multimodal” in AI means a model or system that can accept and/or generate multiple types of data or signals:

- Text (plain language input/output)

- Images / vision (upload pictures, screenshots, photos; interpret them, analyze diagrams)

- Audio / speech (listen to voice input; possibly generate voice or respond via speech)

- Video (less common, more challenging due to size, computation)

- Other file formats (PDFs, slides, charts, etc.)

A fully multimodal system may be able to input and output across modalities (e.g. see image → generate image, or hear speech → respond with voice). Partial multimodality means limited to input or limited output.

History – ChatGPT’s Path to Multimodality: Is ChatGPT Multimodal

Here’s a quick timeline of how ChatGPT gradually became multimodal:

- Plugins, paid tiers, and image tools (e.g. DALL-E) were introduced first to allow image generation.

- Models like GPT-4o introduced “omni” capabilities: text, image, audio/speech input/output. Wikipedia

- Follow-ups like GPT-4.1, o4-mini, etc., expanded vision input capabilities, larger context windows, and tool integrations. The Verge+2Data Studios ‧Exafin+2

- In 2025, GPT-5 has been released with stronger multimodal benchmarks. OpenAI+1

So the evolution has moved from text-only to input plus some output in other modalities, then more robust features like voice, file uploads, and visual understanding.

ChatGPT Models in 2025 & Their Multimodal Capabilities: Is ChatGPT Multimodal

Here are important ChatGPT / OpenAI models in 2025 and what “multimodal” means for each.

| Model | Multimodal Input? | Multimodal Output? | Key Strengths | Limitations |

| GPT-5 | Yes: image, audio, vision input. OpenAI+1 | Yes: supports advanced reasoning, non-text functionality under common interface. OpenAI+1 | Strongest reasoning across modes, handling visual & audio tasks; state-of-art benchmarks. OpenAI+1 | May still have latency on heavy multimodal tasks; access restrictions for free users; possible costs. |

| GPT-4.1 / GPT-4.1 mini / nano | Vision input (images, diagrams) yes. Data Studios ‧Exafin+2The Verge+2 | Mostly text output; image generation sometimes via tools. Data Studios ‧Exafin+1 | Improved context windows; better performance on instruction following and coding tasks. The Verge+1 | Does not always generate in audio/voice; some output types limited; free users may have constraints. |

| o4-mini | Yes: vision and image support; input and some outputs (like image generation via tools) Data Studios ‧Exafin | Output mostly text + tool-based image generation, not always voice. Data Studios ‧Exafin | Fast, efficient; better throughput; suitable for many use cases that don’t require full heavy duty multimodal work. Data Studios ‧Exafin | Not as powerful for complex audio or video tasks; some tasks still better handled by larger models. |

| o3 | Similar to above: supports image input and some analytical tools Data Studios ‧Exafin+1 | Mostly text or tool-aided visual output. Data Studios ‧Exafin | Strong reasoning, stable for many professional uses. | Less optimized for speed in multimodal; resource usage higher. |

| GPT-4o | “Omni” model: can process images, audio/speech, text input. Wikipedia+2OpenAI+2 | Can generate voice responses (via advanced voice mode), image generation via tools, etc. Wikipedia+1 | Among the earlier models to support multimodal in a more “round-trip” way. Good for tasks where seeing, hearing, speaking matter. | Some features may be behind paywalls; fewer context limits; older models may be phased out. |

Key Multimodal Features: Is ChatGPT Multimodal

Is ChatGPT Multimodal: Here are the notable multimodal features available as of 2025, how they work, and who gets access. These are what make ChatGPT more than “just text” now.

Text, Image, Audio Input

- You can upload images, screenshots, diagrams, etc., and ask questions about them. For example: “What does this chart show?”, “Write alt text for this photo.” (GPT models like o4-mini, GPT-4.1, GPT-5 support this). Data Studios ‧Exafin+2The Verge+2

- Voice input / speech: You can speak to ChatGPT (via supported apps or voice-mode) instead of typing. It can listen, transcribe or interpret, then respond. Descript+2Data Studios ‧Exafin+2

Voice / Speech Interaction

- Advanced voice mode: ChatGPT can “speak back” via TTS (text-to-speech). That means if you ask via voice, you can hear the response. Useful for more natural conversation, accessibility. Descript+1

- Record Mode: For macOS desktop app (and soon elsewhere) ChatGPT supports “Record Mode” where you can record voice notes, meetings, then it transcribes and summarizes them. Very useful for meeting summaries, brainstorms. OpenAI Help Center

Image Generation & Visual Outputs

- Tools like DALL-E (and newer image generation models integrated) let users generate images via prompt within ChatGPT. For many models, image outputs are possible (for Paying users or those who have access). Data Studios ‧Exafin+1

- Visual understanding: reading diagrams, interpreting charts, recognizing objects in images. Not perfect, but improving. Data Studios ‧Exafin+1

File Uploads & Analysis (PDFs, Diagrams, etc.)

- Upload documents (PDFs, slides, spreadsheets) and get summaries, extraction of data, comparisons. Useful in research or business settings. OpenAI Help Center+2Data Studios ‧Exafin+2

- Handling multiple files; comparing contents. This helps with research workflows. OpenAI Help Center+1

Context Window & Long-Document Support

- Larger context windows: Certain models (e.g. GPT-4.1, GPT-5) have greatly expanded token limits. They can “remember” longer conversation & interpret longer documents/images in one go. The Verge+1

- Better memory across sessions (for paying users) so that past interactions matter. This helps the multimodal experiences carry continuity. Data Studios ‧Exafin+1

5Record Mode, Deep Research & Tools

- Record Mode (macOS, etc.): Capture voice-notes or meetings → transcribe + summarize. Great for audio-to-text workflows. OpenAI Help Center

- Deep Research: An agentic tool inside ChatGPT doing longer, more autonomous research; can handle images and documents as inputs. Wikipedia

- Connectors, custom GPTs, external tools etc., let you combine modalities (e.g. fetch a document, get data, analyze image) more seamlessly. OpenAI Help Center+2Data Studios ‧Exafin+2

Watch The Video: Is ChatGPT Multimodal

Use Cases – What Multimodal Enables: Is ChatGPT Multimodal

These features are not just bells and whistles—they open up many real-world possibilities.

Education & Research

- Students can take a photo of handwritten notes or diagrams and ask ChatGPT to explain.

- Upload PDFs or research papers, ChatGPT extracts key findings, diagrams, tables.

- Learning languages: speaking practice, hearing pronunciations, reading text + visuals together.

Creative Work: Design, Art, Visual Storytelling

- Artists or designers can give prompts, generate visuals; also show reference images for style matching.

- Writers working on visual content (graphics, comics, etc.) can combine images + text seamlessly.

Business & Productivity: Meetings, Reports, Summaries

- Use voice recording in meetings → summarize, extract action items.

- Upload slides / charts → generate reports, explanations.

- Teams using custom workspaces utilize multimodal tools to collaborate visually (diagrams, flowcharts) and via speech.

Accessibility & Inclusion

- For visually impaired users, voice input/output helps; alt text generation; image descriptions.

- For people with limited typing ability, speech input is helpful.

Technical Tasks: Coding, Engineering, Diagrams

- Uploading architecture diagrams, circuit diagrams, blueprints → analysis, suggestions.

- Code + image combination: e.g. screenshot of error message + code / log file helps debugging.

- Visual math problems: graph, geometry etc.

Limitations & Challenges of Multimodal ChatGPT: Is ChatGPT Multimodal

While the capabilities are impressive, there are trade-offs and issues that users must know.

Accuracy & Hallucination

- Models sometimes misinterpret images (ambiguous content, low quality, complex visuals).

- Hallucinations still happen: generating incorrect information about what’s in an image or what it means.

Privacy & Security

- Uploading images or voice may include sensitive data; risk if model misuses or leaks.

- Voice recordings: handling of personal conversations must be secure; legal/privacy concerns in some regions.

Resource & Compute Costs

- Multimodal tasks are heavier: processing images, voice, larger contexts uses more compute → slower responses or usage limits.

- For free tier users, many features are restricted. Paid tiers often required for advanced multimodal output or higher usage. nexos.ai+1

Input / Output Constraints

- File size limits, number of uploads per chat.

- Some modalities only input (you can show image but may only get text reply). Not always full output across all modes.

- Video support is still limited or preview / experimental in many cases.

Ethical / Bias Risks

- Models may misinterpret cultural visuals, produce biases.

- Generated content risk: misuse, deepfakes, inaccuracies.

- Voice / speech risks: accent, dialect, fairness.

How Multimodal ChatGPT Compares to Other AI Tools (2025): Is ChatGPT Multimodal

To understand how strong ChatGPT’s multimodal capabilities are, it helps to compare with others.

- Gemini (by Google): Also has strong multimodal features, especially in visual search, integrating image + text queries. Some users find Gemini’s image-based search easier. Tom’s Guide

- Specialized tools like image recognition services, speech recognition tools, text-to-video or video generation models may outperform ChatGPT in narrow tasks.

- But ChatGPT’s advantage is breadth: many modalities + many tools + integration + strong text reasoning + conversational interface.

Tips: How to Make Best Use of ChatGPT’s Multimodal Features

If you want good performance and fast results, here are practical tips:

- Give clear context: when uploading an image or voice clip, explain what you want (e.g. “Describe this chart”, “What are the trends?”).

- High-quality inputs: good resolution images, clean voice recordings → better interpretation.

- Specify output format: ask for bullet points, summaries, diagrams, etc. Helps the model understand expected response.

- Use paid tiers when needed: to get higher limits, better models, voice output etc.

- Break big tasks into parts: upload large document + ask summary + then ask deeper questions.

- Verify outputs: especially for visual or audio content, double check facts.

Will ChatGPT Become Fully Multimodal? What to Expect Next

Here are likely directions and what users can expect:

- More robust audio/voice output: better voice synthesis, more natural speech, more languages, dialects.

- Video input/output improvements: more support for video generation, understanding, editing.

- 3D / spatial modalities: possibility to interpret 3D models, environments.

- Improved context, memory & personalization: retaining visual + audio context over sessions.

- Lower-cost, more accessible multimodality: features moving to free tiers, more efficient models (smaller but capable).

- Safer, more accurate vision + speech: reducing hallucinations, bias, ensuring privacy.

Is ChatGPT Multimodal: How can I make money using Multimodal AI?

Money-making opportunities:

1. Content Creation Services

- YouTube automation → Use multimodal AI to generate scripts, create visuals, and even voiceovers for faceless channels.

- Blogging & SEO → Generate long-form SEO content with images and infographics.

- Social media posts → Create TikToks, Instagram reels, and image captions with AI.

💡 How you earn: Ad revenue, affiliate links, sponsored content.

2. Freelancing with AI Skills

- Offer AI-powered design (logos, infographics, social posts).

- Provide AI video editing or captioning services.

- Sell AI-based transcription + translation services for podcasts, YouTube creators, and businesses.

💡 Platforms: Fiverr, Upwork, Freelancer.

3. E-Commerce & Print-on-Demand

- Use multimodal AI to design unique T-shirts, mugs, posters.

- Create AI-generated product images for eBay, Etsy, or Shopify.

- Use AI to generate marketing visuals and descriptions.

💡 Earnings: Product sales + branding services.

4. Education & Coaching

- Build AI-powered courses (with text + video + visuals) and sell on Udemy, Teachable, or Gumroad.

- Offer AI tutoring services for students, language learners, or professionals.

💡 Extra: Create eBooks with AI images and sell them on Amazon Kindle.

5. Business Tools & Automation

- Develop chatbots with multimodal support for websites.

- Offer AI-powered customer support (handling text + voice queries).

- Build AI product demos for SaaS companies.

💡 Earnings: Subscription fees or service contracts.

6. Stock Media & Licensing

- Create AI-generated stock photos, illustrations, and videos.

- Sell them on platforms like Shutterstock, Adobe Stock, or Pond5.

💡 Bonus: Package themed collections (e.g., business icons, holiday graphics) for higher sales.

7. Affiliate & Marketing

- Write product reviews (with AI-written text + AI images).

- Create engaging multimedia ads and landing pages.

- Use AI to analyze customer behavior across text, image, and audio data for better ad targeting.

💡 Earnings: Affiliate commissions + ad revenue.

Conclusion: Is ChatGPT Multimodal

So, is ChatGPT multimodal in 2025? The answer is: Yes—but with nuances.

Is ChatGPT Multimodal: The latest versions of ChatGPT (GPT-5, GPT-4.1, o4-mini, GPT-4o) have high multimodal abilities. It is able to read pictures, voice recognition, handle PDF documents and diagrams, create or assist with picture-based output and possesses software to transform speech, summarize music, etc. Nevertheless, not all models (and free plans) are yet fully multimodal (input + output in all modes). Video, speed, cost, privacy and accuracy are still limited.

To users, being aware of what model you are operating on, what plan you are on and what type of modality you require will make you maximize the use of ChatGPT. Many of the advanced features are possible in case you have access to a model such as GPT-5. Otherwise, much of the easier multimodal capability continues to be highly valued.

Frequently Asked Questions: Is ChatGPT Multimodal

Q1. Is ChatGPT fully multimodal (input + output) in all versions?

A: No. Some models accept images/input but only output text. Full voice output or video generation is more limited, often tied to specific models or paid tiers.

Q2. Can I show a picture and have ChatGPT speak about it?

A: Yes, in models with voice mode + image input (for example GPT-4o, GPT-5, etc.), but it depends on region, subscription, and whether the feature is enabled.

Q3. Do free users get multimodal features?

A: Basic image uploads, analysis, some visual understanding tend to be available. More advanced output (voice, video, large context, image generation) often require paid plans. TechCrunch+2nexos.ai+2

Q4. How large is the maximum context window now?

A: Some of the newer models (like GPT-4.1, GPT-5) have much larger token limits than older ones. For example, GPT-4.1 and GPT-4.1 mini support large context (one million tokens in some announcements) compared to earlier limits. The Verge+1

Q5. Is video input / output supported well?

A: Video is more challenging. Input of video or understanding video is emerging but not yet as common or as robust. Some text-to-video tools are being rolled out (e.g. Sora) but with limits. Reuters

Q6. Can ChatGPT generate both images and text at the same time?

Yes. In multimodal models like GPT-5 and GPT-4o, ChatGPT can create written content and generate matching images. For example, you can ask it to write a blog introduction and design a related infographic.

Q7. Does ChatGPT support file uploads like PDFs and spreadsheets?

Yes. Users can upload PDFs, Word documents, or spreadsheets, and ChatGPT can summarize, extract key points, or analyze the data. This feature is especially useful for students, researchers, and business professionals.

Q8. Is ChatGPT multimodal available for free users?

Free users get some limited multimodal features, like basic image analysis. However, advanced multimodal tools such as voice interaction, file uploads, or high-quality image generation are usually reserved for paid subscribers.

Q9. Can ChatGPT understand handwriting or scanned notes?

Yes, if the handwriting is clear. By uploading an image of handwritten notes, ChatGPT can attempt to interpret and convert them into editable text. However, accuracy depends on the clarity of the writing.

Q10. How is multimodal ChatGPT different from traditional chatbots?

Traditional chatbots are usually text-only and rule-based. Multimodal ChatGPT can process and generate text, images, audio, and files, making it far more versatile, natural, and useful in real-world scenarios.

Pingback: How AI Rendering Tools Are Transforming Content Creation in 2025

Pingback: Agentic AI Review: How to Automate Sales & Support 24/7 With Human-Like AI

https://shorturl.fm/HgYAk

https://shorturl.fm/mae3L

Informative article.

Nice

Your point of view caught my eye and was very interesting. Thanks. I have a question for you. https://www.binance.com/register?ref=QCGZMHR6